[ad_1]

Late last year I told you about Amazon EC2 P3 instances and also spent some time discussing the concept of the Tensor Core, a specialized compute unit that is designed to accelerate machine learning training and inferencing for large, deep neural networks. Our customers love P3 instances and are using them to run a wide variety of machine learning and HPC workloads. For example, fast.ai set a speed record for deep learning, training the ResNet-50 deep learning model on 1 million images for just $40.

Raise the Roof

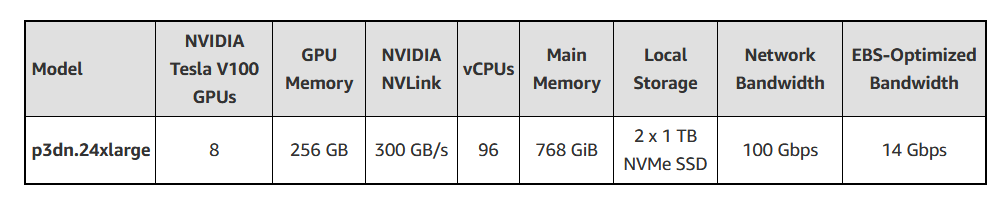

Today we are expanding the P3 offering at the top end with the addition of p3dn.24xlarge instances, with 2x the GPU memory and 1.5x as many vCPUs as p3.16xlarge instances. The instances feature 100 Gbps network bandwidth (up to 4x the bandwidth of previous P3 instances), local NVMe storage, the latest NVIDIA V100 Tensor Core GPUs with 32 GB of GPU memory, NVIDIA NVLink for faster GPU-to-GPU communication, AWS-custom Intel® Xeon® Scalable (Skylake) processors running at 3.1 GHz sustained all-core Turbo, all built atop the AWS Nitro System. Here are the specs:4

| Model | NVIDIA V100 Tensor Core GPUs | GPU Memory | NVIDIA NVLink | vCPUs | Main Memory | Local Storage | Network Bandwidth | EBS-Optimized Bandwidth |

| p3dn.24xlarge | 8 | 256 GB | 300 GB/s | 96 | 768 GiB | 2 x 900 GB NVMe SSD | 100 Gbps | 14 Gbps |

If you are doing large-scale training runs using MXNet, TensorFlow, PyTorch, or Keras, be sure to check out the Horovod distributed training framework that is included in the Amazon Deep Learning AMIs. You should also take a look at the new NVIDIA AI Software containers in the AWS Marketplace; these containers are optimized for use on P3 instances with V100 GPUs.

With a total of 256 GB of GPU memory (twice as much as the largest of the current P3 instances), the p3dn.24xlarge allows you to explore bigger and more complex deep learning algorithms. You can rotate and scale your training images faster than ever before, while also taking advantage of the Intel AVX-512 instructions and other leading-edge Skylake features. Your GPU code can scale out across multiple GPUs and/or instances using NVLink and the NVLink Collective Communications Library (NCCL). Using NCCL will also allow you to fully exploit the 100 Gbps of network bandwidth that is available between instances when used within a Placement Group.

In addition to being a great fit for distributed machine learning training and image classification, these instances provide plenty of power for your HPC jobs. You can render 3D images, transcode video in real time, model financial risks, and much more.

You can use existing AMIs as long as they include the ENA, NVMe, and NVIDIA drivers. You will need to upgrade to the latest ENA driver to get 100 Gbps networking; if you are using the Deep Learning AMIs, be sure to use a recent version that is optimized for AVX-512.

Available Today

The p3dn.24xlarge instances are available now in the US East (N. Virginia) and US West (Oregon) Regions and you can start using them today in On-Demand, Spot, and Reserved Instance form.

Bonus – P3 Price Reduction

As part of today’s launch we are also reducing prices for the existing P3 instances. The following prices went in to effect on December 6, 2018:

- 20% reduction for all prices (On-Demand and RI) and all instance sizes in the Asia Pacific (Tokyo) Region.

- 15% reduction for all prices (On-Demand and RI) and all instance sizes in the Asia Pacific (Sydney), Asia Pacific (Singapore), and Asia Pacific (Seoul) Regions.

- 15% reduction for Standard RIs with a three-year term for all instance sizes in all regions except Asia Pacific (Tokyo), Asia Pacific (Sydney), Asia Pacific (Singapore), and Asia Pacific (Seoul).

The percentages apply to instances running Linux; slightly smaller percentages apply to instances that run Microsoft Windows and other operating systems.

These reductions will help to make your machine learning training and inferencing even more affordable, and are being brought to you as we pursue our goal of putting machine learning in the hands of every developer.

— Jeff;

[ad_2]

Source link

Recent Comments